Herein you’ll find articles on a very wide variety of topics about technology in the consumer space (mostly) and items of personal interest to me. I have also participated in and created several podcasts, most notably Pragmatic and Causality, and all of my podcasts can be found at The Engineered Network.

47 At A Test Match Finally

Growing up in Rockhampton, a 7-hour drive from the state capital of Brisbane, meant that you missed out on a lot of great things that never made it to regional Queensland. That could be concerts, plays, musicals, sports matches and more.

My favourite game to play and watch for as long as I can remember was cricket. As a teenager I’d take the plastic case cricket ball up to the nets and practice bowling for hours on end with my friends and in some cases even by myself. As limited overs cricket was taking off in a big way in the late 80s and early 90s, I’d watch the One Day Internationals (ODIs) on the TV in the dining room with the headphones on (never allowed on the “big” TV because my mother hated cricket).

I started taking more of an interest in Test Cricket, watching several games on TV in different cities around Australia, and started developing a love for the original format of the game. Five days in the field, with three two-hour sessions each day, was a lot of play time. The strategy was very different and it was, for me at least, much more interesting to watch. For the players it truly was a “Test” of their skill and endurance, and it lived up to its name.

In January of 1990 a rare thing happened in my home town - Sri Lanka’s test side were playing at the Rockhampton Cricket Ground (next to Callaghan Park horse-track) and my mother relented to my incessant nagging and stumped up the money for tickets for me to go with my grandfather to watch the Queensland XI vs Sri Lanka. It was a 2-day game and I didn’t have the attention span I do now, but the biggest thrill was meeting the Australian Wicket Keeper, Ian Healy. On the Queensland side we had other Australian Test Match players including Craig McDermott, Stuart Law, Greg Ritchie and Michael Kasprowicz. I was lucky to get Ian Healy and Stuart Law’s autographs…I still have them today.

Fresh from that experience I defied my mother’s wishes (somewhat) and was up at the crack of sparrows watching as much of the Australian tour of the West Indies as I could manage, in 1991. Watching the Aussies play against the mighty West Indies in Jamaica, Guyana, Port of Spain, Barbados and Antigua was eye-opening. The wickets, the grand stands and the atmosphere were totally different from what I’d seen in Australia. I couldn’t watch every day though, since I had school.

A lot of things happened: started at Uni, went to Canada, I got my degree, went to Canada again, came back to Australia and ended up in Brisbane, got married and had four kids. (I know, that’s a lot…) When in Brisbane there were many opportunities to see international matches but I didn’t feel like it was an experience to be had by myself, so I decided to go with my brother-in-law, who was English, to watch England vs Sri Lanka in December of 2002 since my wife (like my mother) hated cricket. It was at the Gabba and it was fine, but there was something about watching your own country play that was missing.

And so it wasn’t until my third-born, Benjamin, started making a name as an opening Fast Bowler for the local junior cricket club, and my oldest, Madeline, started coming to watch Ben bowl, that things started to shift.

During my family-focus-hiatus from cricket, I’d missed an important evolution of the game of Cricket, with the England and Wales Cricket Board in 2003 formalising a new format of the game called Twenty20 or just T20. Test Matches ran for five days, ODIs for one day (50 overs per side) and T20s for about 2.5 hours (20 overs per side). I was skeptical at first but when I took Ben to his first cricket match at the Gabba in January 2018 I was shocked at how many people were there. It was called the BBL or Big Bash League and the Brisbane team were called the Heat.

T20 was fun, but brief, and in subsequent years (not much during COVID) we went with my brothers-in-law and my daughter. This season my oldest son also came along, with the Brisbane Heat winning the season for only the second time in the 13 years the BBL had been running.

Despite this, it wasn’t the Australian team and it wasn’t a test match…but that was about to change. In an unusual split Test match season in Australia, Pakistan played three matches against Australia and the West Indies were scheduled for two. The final Test Match of the 23/24 season was at the Gabba and it was a Day/Night Test Match - only the third ever Day/Night Test to be played at the Gabba and the 22nd Day/Night Test Match played since 2015.

We bought Twilight tickets for the first day since it was a work and school day and arrived 30 minutes into the second session. The atmosphere was very different to the BBL, with the fielders on the boundary “playing” with the crowd and some of them taking breaks between deliveries to sign autographs on the boundary line.

Nathan Lyon motioning the crowd: I Can’t Hear You!

Usman Khawaja talking to the crowd

Nathan Lyon signing Autographs between deliveries

Day 2 we had seats out of the sun but it was quite hot and Day 3 was even worse, with our seats in the sun for nearly 2 hours, the hottest day this summer in Brisbane. Then at about 7pm I started feeling off, running a temperature and my progressive cough that I’d been trying to ignore during the afternoon got a lot worse.

We made it home safely but I felt horrible and, whilst it wasn’t COVID, I was unable to go to the final day we had tickets for, and nor could my daughter as we were both sick. My oldest son then offered to take Ben on the fourth and likely final day and unfortunately for Australia we were bowled out, only 9 runs from the total we were chasing. The West Indies were debuting many new players in this team, including an up-and-coming Fast Bowler, Shamar Joseph. He’s 24 years old, used to work as a security guard and in the second innings took 7 Australian wickets despite an injured toe. We’ll be seeing more from this man in coming years I have no doubt.

Ben, however, stayed for the presentations after the game and not only did he finally get autographs for his signing bat, including that of Shamar Joseph, he had a few minutes chatting with Glenn McGrath.

We’d grabbed some merch, watched some amazing cricket and finally, finally at 47 years old, I’d finally made it to a test match and watched my country play against the West Indies right in front of my eyes. When I watched the test match series of Australia Touring the West Indies on TV some 33 years ago, I never thought I would see them play in person, but now I have. It was AWESOME.

Is This The Show Podcast

A group of internet friends who have known each other for years, and podcasted together on different shows over that time, got together and created a new podcast entitled Is This The Show?.

It’s not meant to be a super-serious show and yes, we’ll talk about things we like, including anything from technology, podcasting itself, Apple, open-source, social media or just anything of interest. We’re aiming to record fortnightly and we’re tackling this very differently from previous shows, in that we each take turns editing and posting.

Due to our shifting life schedules, we have a core group of five people that will be on the episodes, but normally only three per episode. To date we’ve released two episodes:

- Episode 1: Socially Tolerated

- Episode 2: Your Closet’s Bigger Than Mine

You’ll hear myself, Scott Willsey, Clay Daly, Ronnie Lutes and Vic Hudson depending on who’s about that fortnight. ITTS is not part of TEN; it’s a casual side project I’m a part of.

Hope you enjoy it.

Leaving LibSyn

I joined LibSyn for Pragmatic back in 2014 when I left FiatLux/Constellation to take the show indie. Between 2015 and 2017 I left LibSyn and tried Blubrry, then hosted my own files on a server, before returning to LibSyn again.

I originally left due to cost concerns. LibSyn required that you have a subscription for every unique podcast. However, with 6 separate podcasts at that point, many of which I wasn’t producing content for regularly, just maintaining them and following LibSyn’s rules was costing a fortune.

At the time I was still taking sponsorships and advertising and frankly, LibSyn’s statistics were the best and met my needs at the time. When I returned however I restored Causality and Pragmatic, and cheekily added audio files from all other shows as direct download files incrementally to Pragmatic…risking LibSyn’s wrath were I to be caught out. They didn’t call me on it in the end.

Ultimately though I wasn’t using any of their RSS Feed functionality after a failed flirtation with the “Causality App” they were hyping at the time. I was using LibSyn essentially for statistics and file storage - nothing more.

Three things happened over the space of 2 years that made me decide to finally pull the pin on LibSyn, and likely any podcast host in future:

- The new User Interface (LibSyn Five) no longer included a File for Download Only option - everything had to be linked to an episode.

- A fluffy reason: LibSyn’s attitude about having been the longest-running podcast host was wearing really thin. Some of their senior leaders were proving they weren’t nice people either, and really rubbed me up the wrong way. (I’ll let you Google that yourself)

- Statistics could now be openly and professionally tracked using the OP3 project. Causality Stats
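As a rough illustration of how OP3 tracking hooks in: my understanding is that OP3 works as a redirect prefix placed in front of each episode's enclosure URL in the feed. The sketch below assumes the `https://op3.dev/e/` prefix and its handling of `https://` versus `http://` URLs as I recall them from OP3's setup notes, so check those before relying on it. The function name and the media URL are hypothetical.

```python
# Assumed OP3 redirect prefix; confirm against OP3's own setup instructions.
OP3_PREFIX = "https://op3.dev/e/"


def op3_url(enclosure_url: str) -> str:
    """Return the enclosure URL routed through the OP3 redirect."""
    if enclosure_url.startswith(OP3_PREFIX):
        return enclosure_url  # already prefixed, leave untouched
    if enclosure_url.startswith("https://"):
        # https is assumed by the prefix, so the scheme is dropped
        return OP3_PREFIX + enclosure_url[len("https://"):]
    # anything else (e.g. http://) keeps its scheme after the prefix
    return OP3_PREFIX + enclosure_url


# Hypothetical media URL, for illustration only.
print(op3_url("https://media.example.com/causality/episode-01.mp3"))
# prints https://op3.dev/e/media.example.com/causality/episode-01.mp3
```

The media files themselves stay wherever you host them; only the URL published in the RSS feed changes.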

I’m not normally one to include “fluffy-feely-reasons” but the trend I’ve seen in LibSyn’s behaviour on “The Feed” podcast led me to stop listening back in 2021, and their attitude of having been the longest-standing podcast host meaning they understood the landscape the best, was less and less true with every passing week. It was frankly becoming embarrassing to even mention their analysis of pretty much anything.

The LibSyn product (interface) was being redesigned in v5 to compete with others in the marketplace insofar as it was more aimed at newbies/non-tech users (which is fine) and that wasn’t for me. Increasingly this is the case for all podcast hosts - the more technical of podcasters need not apply. That’s not a judgement, but it is a trend.

Finally if I could choose to spend about $10USD/m on hosting/statistics then I’d rather support an open project and file storage of my own choosing/control than to continue to support LibSyn.

Having spoken with my friends in the PC2.0 space, I shifted my media to an S3-compatible storage bucket, backed by Cloudflare’s CDN, and tested to confirm download speeds were as fast as LibSyn’s. Those tests ended a few weeks ago and now I’ve left LibSyn - this time for good.
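A download speed comparison of this kind can be sketched in a few lines. This is a minimal illustration and not the test that was actually run: the helper names are hypothetical, the URLs in the usage comment are placeholders, and a single timed download is only a rough indicator (repeat runs from different locations would give a fairer picture).

```python
import time
import urllib.request
from typing import Callable, Iterable


def throughput_mbps(num_bytes: int, seconds: float) -> float:
    """Convert a byte count and elapsed time into megabits per second."""
    if seconds <= 0:
        raise ValueError("elapsed time must be positive")
    return (num_bytes * 8) / (seconds * 1_000_000)


def http_chunks(url: str, chunk_size: int = 64 * 1024) -> Iterable[bytes]:
    """Stream a URL in chunks using only the standard library."""
    with urllib.request.urlopen(url) as response:
        while chunk := response.read(chunk_size):
            yield chunk


def time_download(fetch: Callable[[], Iterable[bytes]]) -> float:
    """Time one full download and return its throughput in Mbps."""
    start = time.perf_counter()
    total = sum(len(chunk) for chunk in fetch())
    elapsed = time.perf_counter() - start
    return throughput_mbps(total, elapsed)


# Usage (placeholder URLs, not real endpoints):
#   old = time_download(lambda: http_chunks("https://old-host.example.com/ep.mp3"))
#   new = time_download(lambda: http_chunks("https://cdn.example.com/ep.mp3"))
#   print(f"old host: {old:.1f} Mbps, new CDN: {new:.1f} Mbps")
```

Passing the download in as a callable keeps the timing logic separate from the network code, so the same function works for any source that yields bytes.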

Testing Whisper Transcriptions

In my efforts to support the Podcasting 2.0 initiative, I’ve had a stab at developing transcripts for Causality. I first started on this before PC2.0 back in 2018 and again later that year where I was using Dragon Dictate with only very average results.

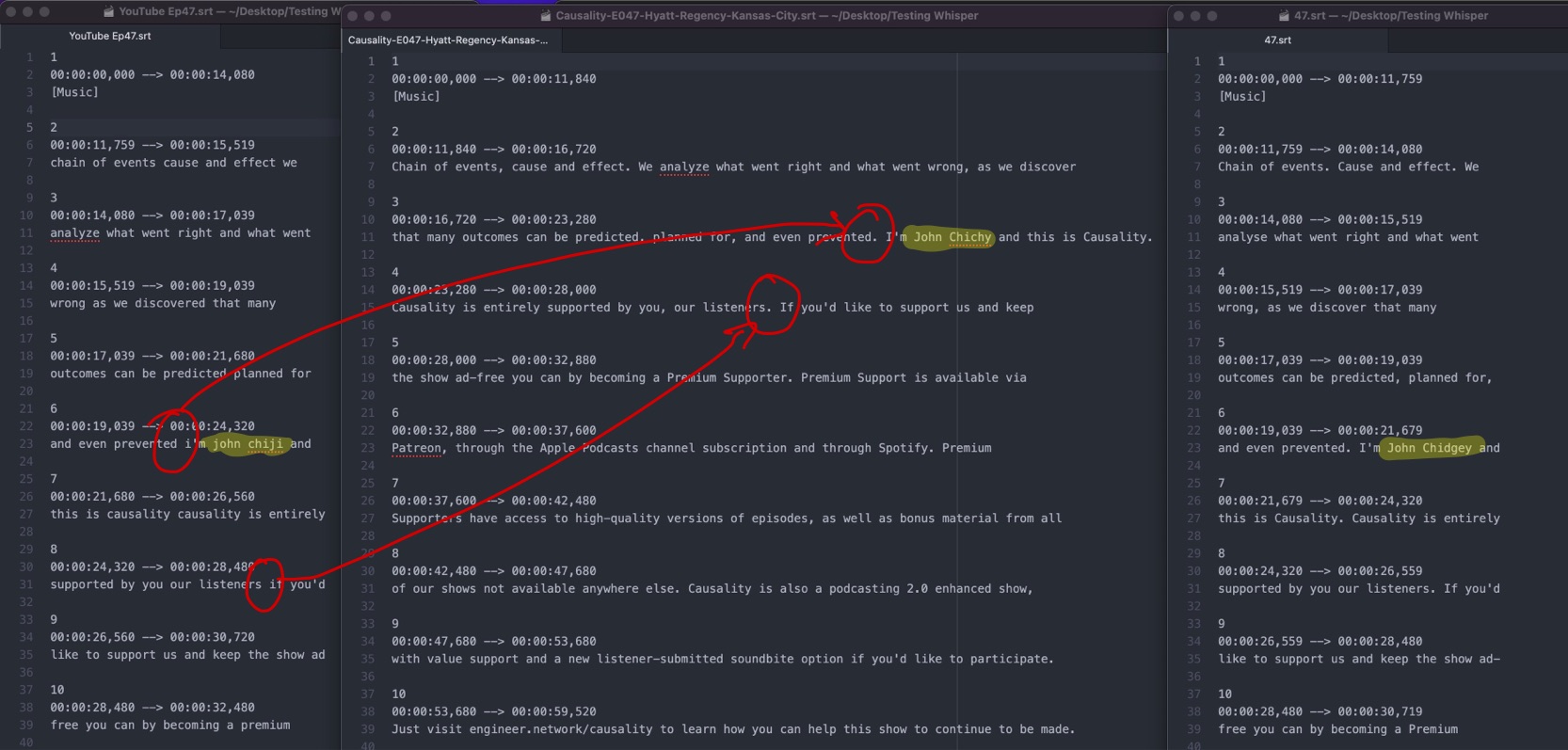

I then went on and tried YouTube, since via LibSyn I’d been publishing YouTube auto-generated videos for Pragmatic and Causality episodes for several years, and I realised I could extract the SRT files from YouTube. They were substantially better but still had issues that required a significant amount of effort to bring them up to a standard I would accept on TEN.

Using Subtitle Studio I was able to align, tweak and correct the files then publish them to the site. All the plumbing had been built so all I needed to do was add the SRT file and job done.
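For anyone unfamiliar with the format, an SRT file is just numbered text blocks, each with a timing line and one or more lines of text. The following is a minimal sketch of reading one, assuming the standard SubRip layout; it is not the plumbing used on TEN, and the sample text and names (`Cue`, `parse_srt`) are made up for illustration.

```python
import re
from dataclasses import dataclass

# Timing line, e.g. "00:00:02,500 --> 00:00:05,000"
TIMING = re.compile(
    r"(\d{2}):(\d{2}):(\d{2}),(\d{3}) --> (\d{2}):(\d{2}):(\d{2}),(\d{3})"
)


@dataclass
class Cue:
    index: int
    start_ms: int
    end_ms: int
    text: str


def _to_ms(h: str, m: str, s: str, ms: str) -> int:
    """Convert hours, minutes, seconds and milliseconds to milliseconds."""
    return ((int(h) * 60 + int(m)) * 60 + int(s)) * 1000 + int(ms)


def parse_srt(srt: str) -> list[Cue]:
    """Parse SubRip text into cues; malformed blocks are skipped."""
    cues = []
    for block in re.split(r"\n\s*\n", srt.replace("\r\n", "\n").strip()):
        lines = block.split("\n")
        if len(lines) < 2 or not lines[0].strip().isdigit():
            continue
        match = TIMING.match(lines[1].strip())
        if not match:
            continue
        g = match.groups()
        text = " ".join(line.strip() for line in lines[2:])
        cues.append(Cue(int(lines[0]), _to_ms(*g[:4]), _to_ms(*g[4:]), text))
    return cues


# Made-up sample, for illustration only.
SAMPLE = """1
00:00:00,000 --> 00:00:02,500
chain of events

2
00:00:02,500 --> 00:00:05,000
cause and effect
"""

for cue in parse_srt(SAMPLE):
    print(cue.index, cue.start_ms, cue.end_ms, cue.text)
```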

Unfortunately however, the process of correcting the numerous errors in the YouTube SRT was monstrous. A 45 minute episode would take me 4-5 hours to fix all of the SRT errors. I edited Episodes 3, 11, 17, 18, 22, 31, 32, 35, 36, 47 and simply burned out. I lost many weekends, long nights and the pay off was precisely zero. No listeners gave any feedback of any kind either way and so…I stopped bothering.

Irrespective of whether you think I should just put up rubbish transcripts anyway, or how you choose to interpret the legal requirements for posting transcripts in your specific country, I still wanted to do this…so when I saw people discussing an OpenAI-derived project, MacWhisper, I gave it a shot. The results were, frankly, transformative.

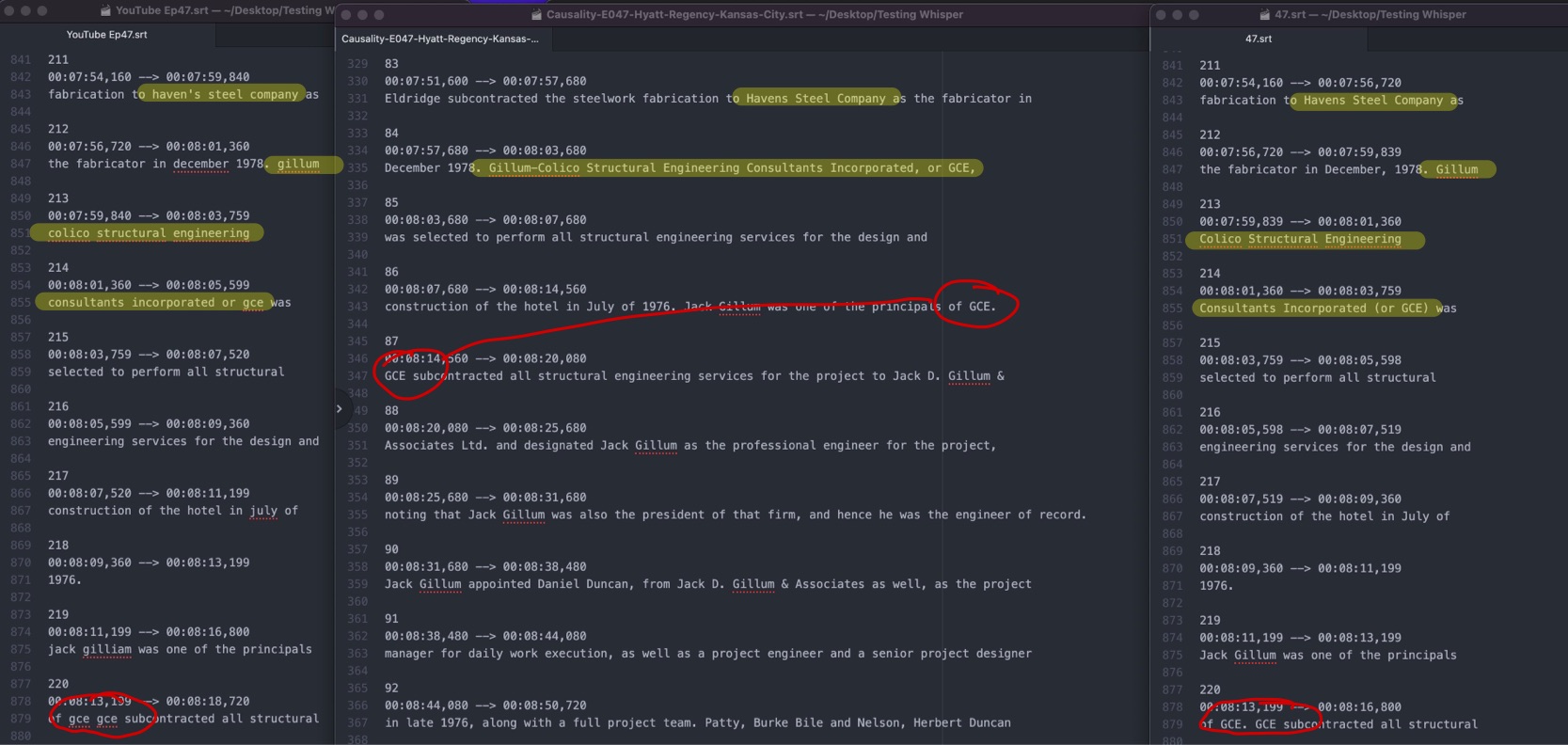

The screen shot on the Left is the YouTube SRT, the middle is Whisper’s, and the Right is my corrected YouTube SRT. In the above comparison note that with a dodgy name like “Chidgey” no-one gets it right except me. That’s fine. It’s a cross I have to bear I guess and that’s okay. The first point of note: Whisper nails the punctuation almost every time! For every missed Full Stop, there’s a missed following capitalisation. That’s a huge time saver!

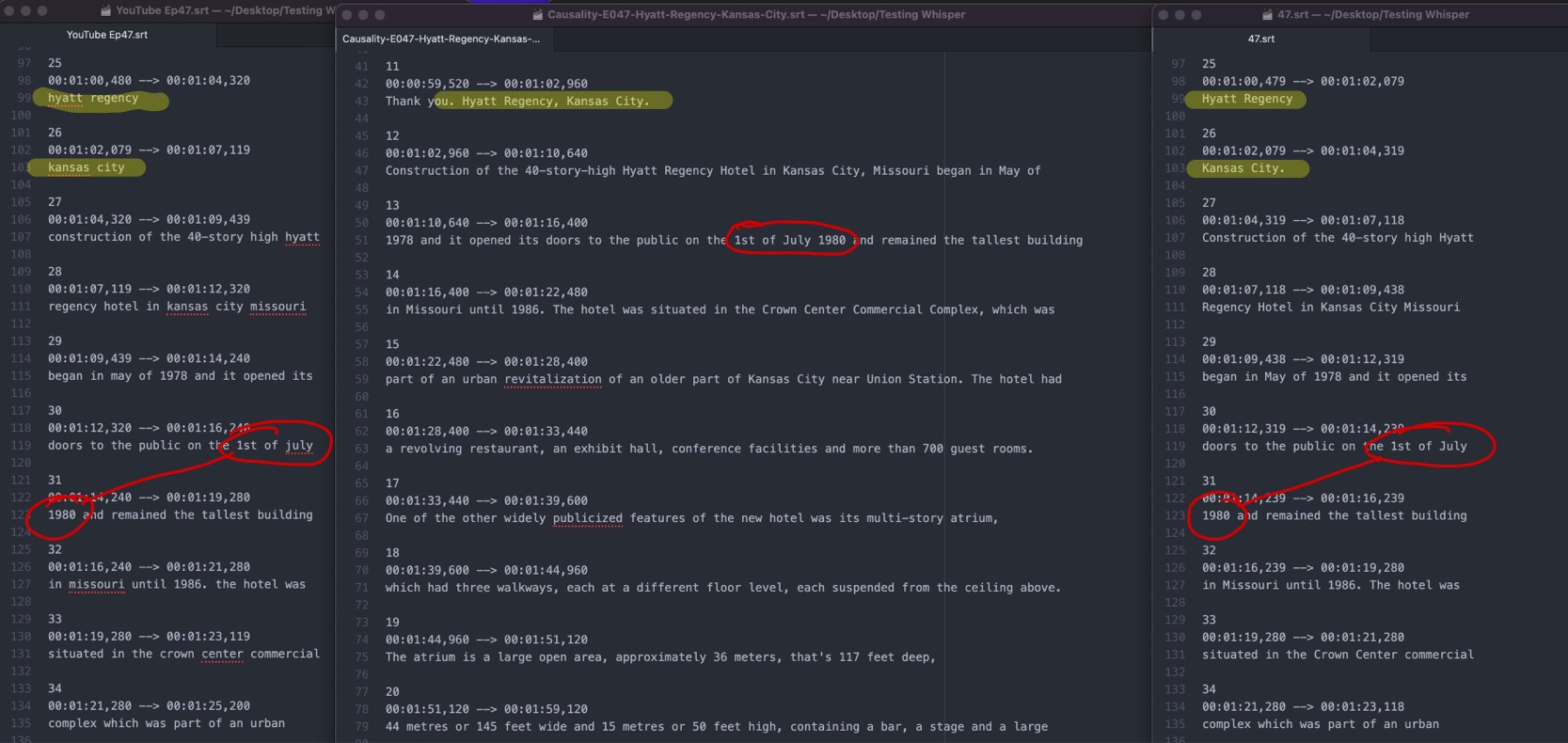

Again Whisper nails the capitalisation of the correct words - in this example above, the title of the episode and it also inserts a comma which arguably I missed in mine. I also like how Whisper capitalises the date for me too. Not too shabby.

Here’s where I lost so much time in the past with the YouTube SRT: Company names. Whisper got the capitalisation and punctuation correct (Yellow Highlight); the only tweak I would make is adding parentheses around “or GCE”, but otherwise it’s perfect! Again, Whisper nails the capitalisation of GCE (the company name) and the punctuation between sentences. Amazing!

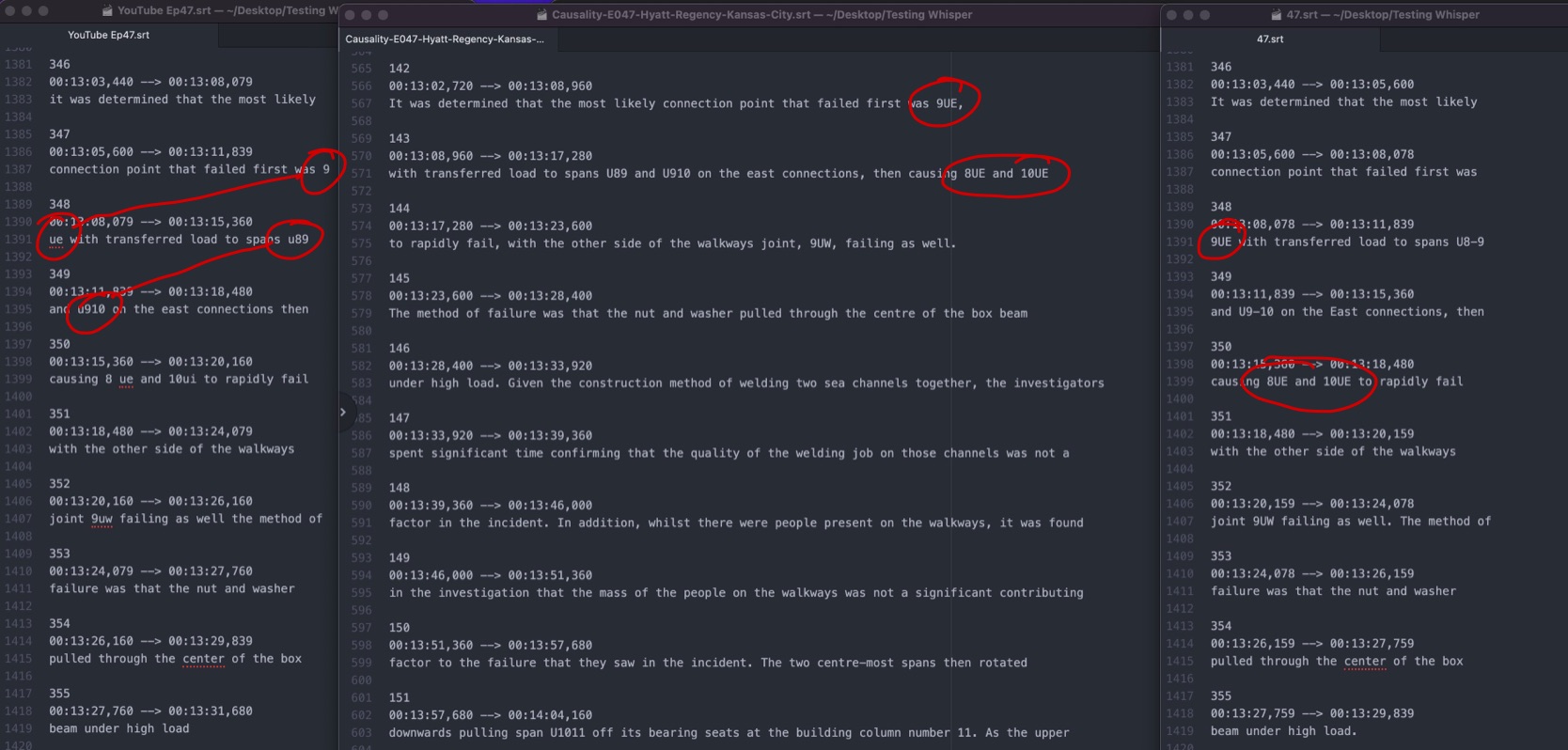

The last and hardest part now is the technical terminology which is always a struggle for AI transcripts. YouTube put 9 ue when it should be 9UE, but Whisper nails that once again. YouTube does get the next two correct but didn’t capitalise 8UE and 10UE where Whisper did.
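Recurring slips like these are the sort of thing a small find-and-replace pass could tidy up before any manual editing. The sketch below is only an illustration under my own assumptions: the glossary entries come from the examples in this post, the function name is hypothetical, and a real glossary would need checking per episode so it doesn't "correct" text that was already right.

```python
import re

# Hypothetical glossary: pattern for the mangled form -> preferred spelling.
GLOSSARY = {
    r"\b(\d+)\s*ue\b": r"\1UE",  # "9 ue" or "8ue" -> "9UE" / "8UE"
    r"\bgce\b": "GCE",           # company abbreviation
}


def normalise_terms(text: str, glossary: dict[str, str] = GLOSSARY) -> str:
    """Apply each glossary substitution, ignoring case in the input."""
    for pattern, replacement in glossary.items():
        text = re.sub(pattern, replacement, text, flags=re.IGNORECASE)
    return text


print(normalise_terms("the 9 ue, 8ue and 10ue wires were supplied by gce"))
# prints: the 9UE, 8UE and 10UE wires were supplied by GCE
```

Terms that are already correct pass through unchanged, so running it over a Whisper transcript as well should do no harm.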

With the above results I’m just blown away and last night I began transcribing every Causality episode using MacWhisper on the Best accuracy model (2.8GB) to get the best result I can. I’ll work my way through the back catalogue but hopefully the editing times for SRTs will be significantly reduced and I can finally post word-accurate transcripts to TEN.